Do we still need RAG if context windows hit 1M tokens?

Long context windows have made the debate legitimate. The answer still depends on what problem is actually being solved.

The problem every LLM has

LLMs are trained on a snapshot of the world. Products built on top of them - ChatGPT, Claude, Perplexity - can search the web, but that is a tool injecting retrieved content into the context window, not the model learning anything new. The model itself cannot reliably access or recall information beyond its training data, especially when it is private, recent, or highly specific. Also nothing about internal documents, proprietary codebases, private wikis, or anything that was never in the training corpus to begin with.

This is the context injection problem. An LLM can only reason about what is in its context window. Getting the right data there, at the right time, is the engineering challenge that RAG was built to solve.

How RAG works

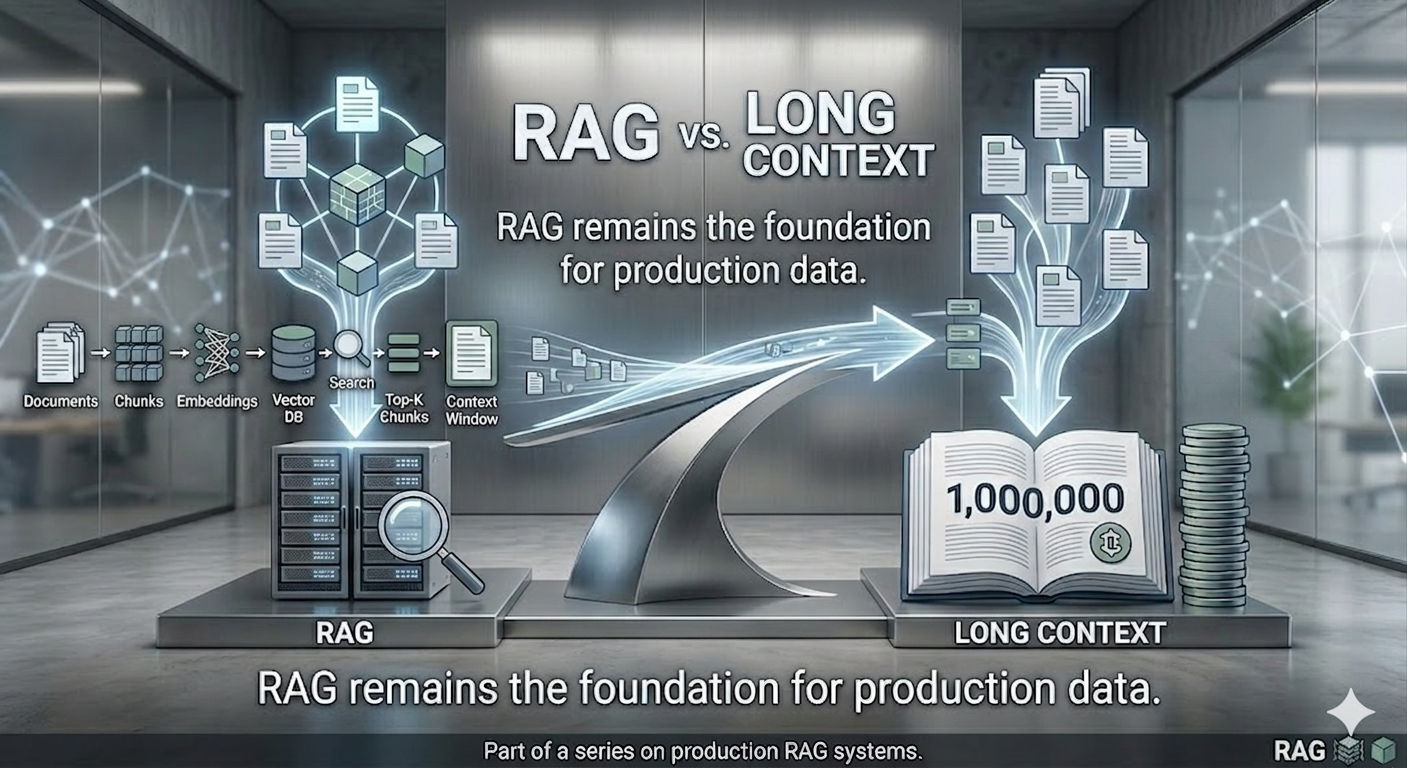

The idea is straightforward. Documents - PDFs, code files, internal reports, whatever needs to be searchable - are broken into smaller chunks and passed through an embedding model. That model converts each chunk into a vector: a numerical representation of its meaning. Those vectors are stored in a vector database.

When a query comes in, the same embedding model converts it into a vector and performs a semantic search - finding the chunks whose meaning is closest to the question. Those chunks are injected into the context window alongside the query, and the LLM generates an answer grounded in that retrieved context.

Documents → Chunks → Embeddings → Vector DB

↓

Query → Embedding → Semantic Search → Top-K Chunks → Context Window → Answer

The key constraint: RAG does not give the model everything. It gives the model the most relevant pieces. That filtering is both its strength and its fundamental risk (recall failure or retrieval miss).

The case against RAG - long context windows

For a long time, stuffing documents directly into the context window was not a realistic alternative. Early LLMs had context windows of around 4,000 tokens - enough for a few pages of text, not nearly enough for a knowledge base. RAG was effectively the only option.

That has changed. Some models now support context windows exceeding one million tokens. To put that in concrete terms: the entire Lord of the Rings series fits comfortably, with room to spare.

This shift makes a genuine argument possible. If the data fits directly in the context window, the embedding model, vector database, chunking strategy, and retrieval logic can all be removed. The architecture simplifies to: get the data, send it to the model. That is a meaningful reduction in moving parts.

Long context also eliminates what is sometimes called the retrieval lottery - the risk that the retrieval step fails to surface the right document even though it exists in the database. Semantic search is probabilistic. There are cases where it returns the wrong chunks, or misses the relevant passage entirely, without any visible error. Long context removes retrieval failure, but replaces it with attention-related failure modes

There is also a class of problem where long context is structurally better. Comparing a requirements document against a release document to find omissions requires both documents in full - not isolated snippets from each. RAG retrieves fragments. Long context delivers the complete picture.

Where RAG still wins

The long context argument is real, but it applies to a specific subset of use cases. Three constraints limit how far it generalises.

The infinite data problem. A context window of one million tokens sounds large. An enterprise data lake is measured in terabytes or petabytes. Even the largest context windows are a rounding error relative to the volume of data organisations actually need to make queryable. A retrieval layer is not optional when the dataset is effectively unbounded - it is the only mechanism for filtering what reaches the model.

The compute cost of rereading. Long context requires processing the full document on every query. A 500-page manual is roughly 250,000 tokens. Injecting it on every request means the model processes those 250,000 tokens every time, regardless of how small or targeted the question is. RAG shifts cost to indexing and retrieval, avoiding repeated full-document inference per query. For high-query-volume systems or frequently changing data, that difference in compute cost compounds quickly.

Attention dilution. Research on long context models consistently shows that attention quality degrades as context length grows. A specific paragraph buried in the middle of a 2,000-page document is often missed entirely, or details from surrounding text bleed into the answer. RAG narrows the signal by removing the noise - presenting the model with five relevant chunks rather than a million tokens of tangentially related content.

The honest answer on when to use which

Neither approach dominates. The right choice depends on the characteristics of the specific problem.

| Situation | Better fit |

|---|---|

| Bounded dataset, complex reasoning across the whole document | Long context |

| Analysing a single contract, summarising a specific book | Long context |

| Enterprise knowledge base, large or growing corpus | RAG |

| High query volume, cost-sensitive deployments | RAG |

| Frequently changing data where re-indexing is manageable | RAG |

| Need high answer reliability / grounding | RAG |

| Finding what is missing across two complete documents | Long context |

In practice, the two approaches are often not mutually exclusive. A system might use RAG to retrieve the most relevant documents from a large corpus, then inject those documents in full into a long-context window for deeper reasoning - combining the filtering strength of retrieval with the global reasoning capacity of long context.

Why this matters beyond the architectural debate

The “RAG vs long context” discussion is not just an infrastructure choice. It reflects something more fundamental about how LLMs are being deployed.

Private data - internal documents, proprietary systems, personal knowledge bases - was never on the public internet and never will be. Web search does not reach it. Long context windows do not create it. RAG is the retrieval layer for data that exists nowhere except where it was built.

That is the gap RAG was built for. The tooling has matured, the architectural patterns are well understood, and the failure modes - retrieval drift, silent failures, hallucination from noisy context - are increasingly well documented.

Long context windows have made the debate more nuanced. They have not made RAG unnecessary. For any system that needs to reason over private, large, or frequently updated data at production query volumes, the retrieval layer remains the practical foundation.

This post is part of a broader series on building and monitoring production RAG systems. The two-part series on instrumentation, observability, and CI gates for a production RAG pipeline covers what happens after the architecture decision is made - and why monitoring it matters as much as building it.

#RAG #LLM #AIEngineering #VectorDatabase #LongContext #GenerativeAI #MLOps #DataEngineering